I just read this interesting article "The End of RAG?" which discusses the evolution of Retrieval Augmented Generation (RAG) in the context of large language models (LLMs).

Donato Riccio's Viewpoints:

- RAG, a workaround for LLMs' limited context, may soon be outmoded due to model architecture and scale advancements.

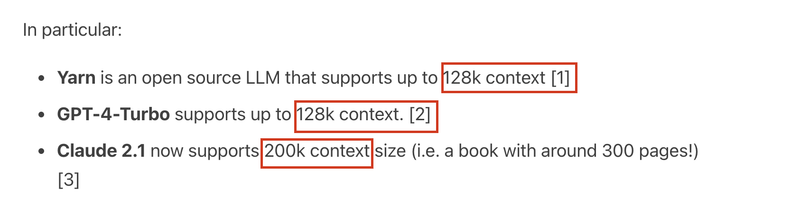

- Predicts that RAG will become unnecessary as LLMs scale their context sizes, citing YaRN, GPT-4 Turbo, and Claude 2.1, which support much larger contexts.

- Highlights new architectures like Mamba and RWKV, which scale linearly with sequence length instead of quadratically as Transformers do, and which could potentially replace them.

You can read the full article and share your thoughts using this link: The End of RAG?

Note:

Transformers, Mamba, and RWKV are all architectures used in machine learning, but they differ in their approach and efficiency:

- Transformers: Known for their self-attention mechanism, they excel at understanding the entire context of the input data. However, their computational cost grows quadratically with sequence length, which can be a limitation for longer sequences.

- Mamba: Aims to replace the Transformer architecture by using structured state space models (SSMs) with a selection mechanism. This allows for linear scaling and efficient handling of longer sequences, overcoming the quadratic complexity of Transformers.

- RWKV (Receptance Weighted Key Value): Combines the strengths of RNNs and Transformers. It uses a linear attention mechanism for efficient memory use and inference, achieving linear scaling. This makes it more efficient than Transformers for long sequences.

Each has its strengths: Transformers are powerful but less efficient for long sequences, while Mamba and RWKV offer more scalable solutions for handling longer data sequences.
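
To make the quadratic-versus-linear difference from the note concrete, here is a minimal toy sketch in plain Python. It is not an implementation of Transformers, Mamba, or RWKV: the function names are my own, the attention is a scalar, non-causal simplification, and the recurrence uses a fixed decay as a stand-in for the input-dependent state updates those models actually use. It only shows why one approach compares every token with every other token while the other carries a single fixed-size state forward.

```python
import math


def attention_pair_count(seq_len: int) -> int:
    """Query-key score computations in full self-attention: one per token pair."""
    return seq_len * seq_len


def recurrent_step_count(seq_len: int) -> int:
    """State updates in a recurrent scan: one per token."""
    return seq_len


def toy_self_attention(xs):
    """Scalar, non-causal self-attention (illustrative only).

    Each output is a softmax-weighted average over ALL inputs, so the
    nested loops do len(xs) * len(xs) score computations.
    """
    out = []
    for q in xs:
        scores = [q * k for k in xs]
        m = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        out.append(sum(w * v for w, v in zip(weights, xs)) / total)
    return out


def toy_linear_scan(xs, decay=0.9):
    """Scalar recurrence h_t = decay * h_{t-1} + (1 - decay) * x_t.

    Assumption: a fixed decay, chosen for illustration. One pass over the
    input with a single running state, so the work is len(xs) updates.
    """
    h = 0.0
    out = []
    for x in xs:
        h = decay * h + (1 - decay) * x
        out.append(h)
    return out


if __name__ == "__main__":
    # Compare how the amount of work grows as the sequence gets longer.
    for n in (1_000, 10_000, 100_000):
        print(n, attention_pair_count(n), recurrent_step_count(n))
```

Going from 1,000 to 100,000 tokens multiplies the recurrent work by 100 but the attention work by 10,000, which is the gap the article points to when it talks about scaling context sizes.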
